Enterprise codebases will never fit cleanly into an AI context window, and the limits are structural rather than engineering problems waiting on the next model release. Scaling AI coding agents at system level requires a structured, continuously maintained representation of the system itself, exposed to the model as a queryable map and governed by lifecycle gates that prevent "almost-right" code from quietly breaking production.

A staff engineer points an AI agent at a production repository, asks it to add a feature that touches three services, and gets back a confident, well-formatted patch. The patch reads beautifully. It calls an internal API that does not exist, assumes a deprecated event schema, and bypasses a rate limiter that exists for a reason discovered three years ago in an incident nobody on the current team remembers.

This is not a model failure. It is not even a prompt failure. It is a category error.

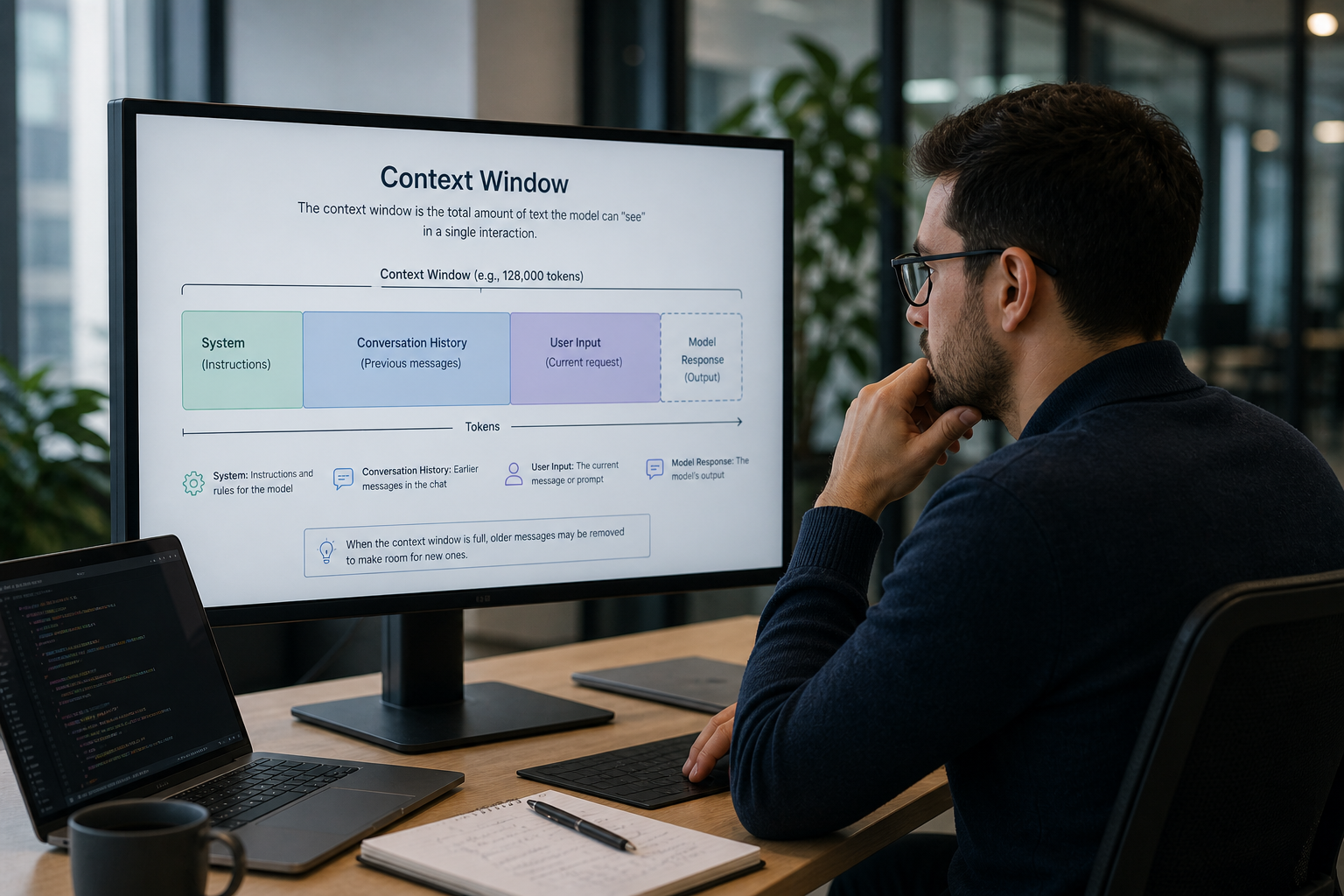

The seductive idea behind most AI coding workflows is that if we just stuff enough of the codebase into the model, the answers will improve. Bigger context windows, smarter retrieval, more tokens. The story goes that we are a few quarters away from a model that "sees the whole system."

That story is wrong, and it will keep being wrong no matter how many tokens the next model accepts. Here is why, and what to build instead.

"Just give it more context" is a tempting mental model

The intuition is reasonable. If a coding agent generates almost-right code because it does not understand the system, then giving it more of the system should fix the problem. Demos reinforce this idea. A small repo plus a clear prompt produces an impressive result. So we extrapolate: scale the inputs, scale the outputs.

In practice, that extrapolation collapses on contact with brownfield reality. The systems that matter, the ones that run real businesses, are not small repos with clean READMEs. They are years of decisions encoded in code, configuration, infrastructure, runbooks, design docs that drifted, and people's heads.

Production software is not a file tree. It is a knowledge graph that happens to be partially expressed as files.

Tokens were never a knowledge unit

A token is a slice of text the model uses to predict the next slice of text. That is the contract. A token is not a fact, not a relationship, not a decision, and not a constraint. The fact that we can paste a .py file into a context window does not mean we have given the model the meaning of that file.

When an experienced engineer reads a function, they are not just parsing the characters. They are pulling on a much larger structure: the architectural pattern this module belongs to, what calls it and what it calls, the data shapes flowing through it, the operational behavior under load, the historical reason a particular branch is shaped that way. Most of that structure is not in the file. It lives in the relationships between files, between repositories, between systems, and between the people who built them.

This is the gap we have written about before in the context of tribal knowledge: the code is the artifact, not the system. The system is the set of relationships that produced the code, and those relationships are mostly invisible from any single file.

So when we push more tokens at a model, we are not giving it more system understanding. We are giving it more text. The two are not the same thing.

Three fundamental limits, not three engineering limits

Engineers tend to assume any limit is an engineering limit. Wait six months and the next model will fix it. That assumption breaks down here. The constraints are structural, not implementation details.

Scale is not where it ends

Yes, a real enterprise codebase is bigger than a current context window. A modest microservices estate is millions of lines across dozens of repositories. Add infrastructure as code, runbooks, ADRs, internal libraries, and the institutional Confluence rot, and you are well past any commercially available window. Industry surveys of large codebases consistently show that even single-repo monoliths now run into the tens of millions of lines, and most enterprises have many.

But scale is the least interesting limit. Even if the model accepted 100 million tokens tomorrow, two harder problems remain.

Structure does not survive flattening

A context window is a flat sequence of tokens. A software system is a graph: services that depend on services, modules that import modules, schemas that bind to schemas, deploys that ride on infrastructure that ties to identities. When you flatten a graph into a sequence, you lose the relationships. The model can still see the nodes, but it has to reconstruct the edges from text proximity. That reconstruction is exactly where "almost right" comes from.

Imagine handing someone the alphabetized list of every street name in a city and asking them to plan a delivery route. They have all the data. They have none of the structure.

Attention degrades with distance

The third limit is the most under-discussed. Long-context models do not pay equal attention to everything in the window. Stanford and Berkeley researchers documented this in Lost in the Middle: performance drops sharply for information placed in the middle of a long context, even when the information is exactly what the question requires. The pattern is robust across model families and prompting strategies.

The implication is uncomfortable. Even when the right context is technically inside the window, the model often behaves as if it were not there. Bigger windows do not guarantee bigger comprehension. They guarantee bigger surface area for missed retrievals.

These three limits compound. Your codebase is too big, the relationships do not survive the format, and the model's attention is unreliable at the scales we are trying to push it to. No single hardware generation undoes that.

The "almost-right" tax

When you operate inside this gap, the cost shows up as a specific failure mode that is harder to catch than outright wrong code. The output compiles. It passes obvious tests. It looks like something a competent engineer might write. And it is subtly wrong in ways that violate invariants the codebase enforces but does not state.

Almost-right code is the most expensive kind of wrong. The reviewer has to reconstruct the system context the AI did not have, then check the AI's work against that mental reconstruction, for every change. Multiple industry surveys, including the Stack Overflow Developer Survey, show that developer trust in AI-generated output has been declining even as AI tool adoption has risen. That gap is not irrational. It is engineers responding to the failure mode they keep encountering.

The downstream costs are predictable: more PR review time, more rollbacks, more post-incident archaeology, more architectural drift as small wrong-shaped changes accumulate. The productivity paradox of AI in engineering is largely this tax compounding.

What to build instead: a structured representation of the system

If the problem is that tokens are not a knowledge unit, the answer is not to push more tokens through the same hole. It is to build the knowledge unit explicitly, outside the model, and let the model query it.

In practice that means constructing a structured representation of the system itself. Not a wiki. Not a vector index over READMEs. A live, versioned, machine-readable model that captures what an experienced engineer would tell you on a whiteboard:

- The architecture: services, components, the boundaries between them

- The dependencies: what calls what, what reads what, what depends on what version of what

- The data shapes: schemas, contracts, the events that flow between systems

- The infrastructure context: where things run, what scales them, what protects them

- The decision history: why this pattern, what was rejected, what constraints applied

- The operational behavior: SLOs, known failure modes, runbooks, the things that break at 3 a.m.

This representation is constructed from the system, kept in sync with the system, and exposed to AI tooling as the source of truth the system never had in one place. We have written previously about why a semantic layer is the missing component for AI coding agents; this is the same idea taken seriously.

The shift is from "stuff the codebase into the prompt" to "give the model a queryable map." The model does not have to hold the system in its head. It does not have to reconstruct the graph from a sequence of tokens. It asks for the parts it needs, gets structured answers, and reasons over those.

What good looks like in practice

A useful representation has four properties.

It is built, not assumed. The graph is extracted from the running system: source code, infrastructure as code, observability data, ADRs, schemas, deploy configurations. Tribal knowledge gets surfaced into machine-readable form rather than living in three senior engineers' heads.

It is continuously maintained. As the system evolves, the model evolves with it. A representation that goes stale is worse than no representation; it gives confident wrong answers. The maintenance has to be automated and triggered by the same events that change the system itself.

It is queryable, not just searchable. The model is not asking "which file mentions UserService," it is asking "what depends on UserService.authorize, what does that method assume about the session token, and what tests cover that path." Those are graph queries, not keyword searches.

It is governed. Because AI is now operating with system-level understanding, the gates that prevent drift have to operate at system level too. Architectural invariants, deployment policies, and integration contracts are not hopes; they are checks the lifecycle enforces before code is merged. This is the difference between AI that suggests and AI that ships safely.

This is the structural argument behind why raw coding agents are not a software development lifecycle, and it is the foundation under our AI-Managed Software Lifecycle service. The semantic representation, the supervised gates, and the lifecycle integration are not three nice-to-haves. They are the minimum viable unit for AI to operate on a real codebase without paying the almost-right tax.

What this means for engineering leaders

The takeaway is not that AI coding tools are useless. They are useful, on small surfaces, with a competent reviewer in the loop. The takeaway is that scaling them up by feeding them more of the codebase will not work, no matter how patient you are about waiting for the next context window.

If you want AI to operate at system scale, you have to build the system representation it operates on. Either you build that capability internally, complete with the ingestion, the ongoing maintenance, the gates, and the lifecycle integration, or you operate it as a managed service. Both are valid choices. Pretending the problem is going to be solved by the next model release is not.

The bottleneck has moved. It is no longer the model's reasoning ability. It is the absence of a structured map of the systems we are asking it to reason about. Gartner's analysis of agentic AI projects, summarized in their published research at gartner.com, points to a similar conclusion from the failure side: the projects that are quietly being canceled in the next two years share this gap. They tried to scale AI through the model. The ones that survive are scaling it through the system representation.

Build the map, or operate without it. Those are the choices. Almost-right code at production scale is not.