- AI agents replace process and admin work. They don't assist with it or speed it up incrementally. They eliminate manual tasks entirely.

- Most companies don't need an AI strategy. They need a process deletion strategy. AI agents are the tool that makes deletion possible.

- Previous automation fails because it needs rigid rules and structured inputs. Most admin work has neither.

- Your team must be AI Native before attempting AI Transformation. Without foundational AI literacy, pilots fail, trust erodes, and the cycle repeats.

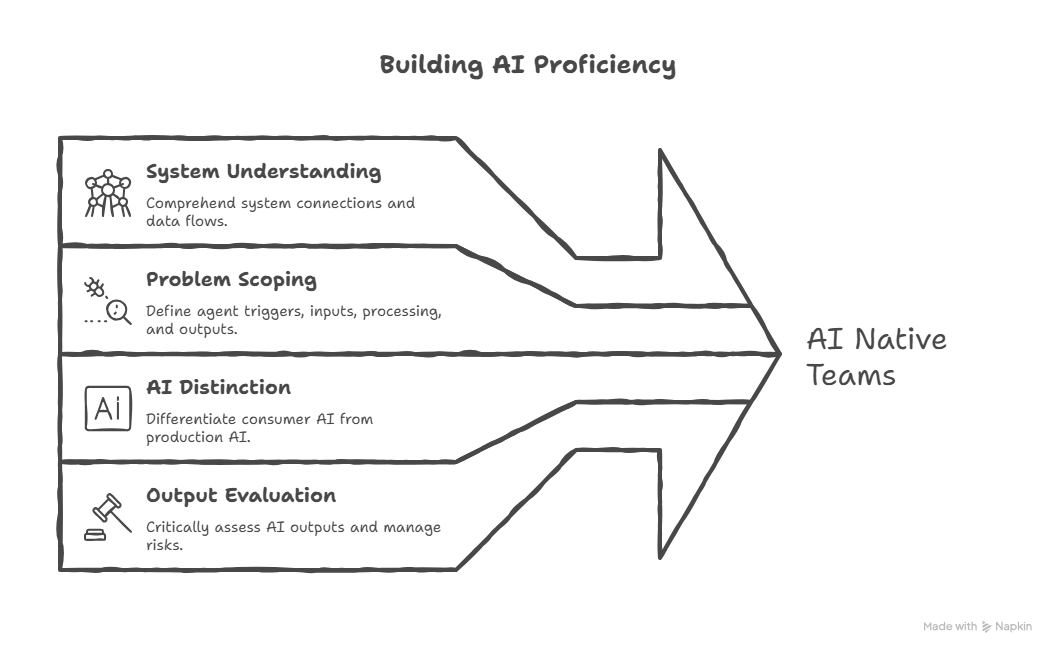

- AI Native means four things: understanding how systems connect, scoping problems for agents, distinguishing consumer AI from production AI, and evaluating outputs critically.

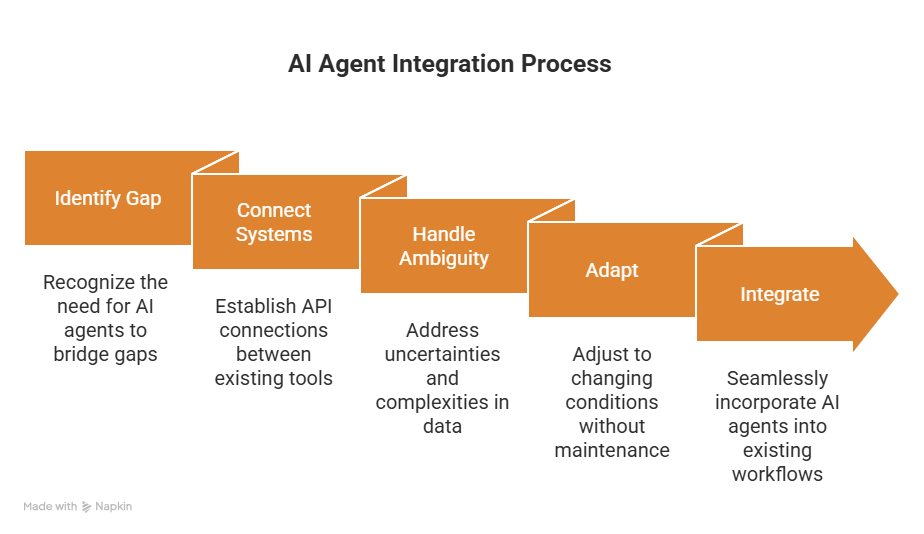

Most organisations are buried in admin that adds little value. Traditional automation failed because real work needs context, judgement, and coordination across tools. AI agents can now handle this, but only if teams are AI Native. Most AI pilots fail because of a lack of understanding, not bad tech. This post explains CloudGeometry's five-step framework (Brief, Tooling, Sprint, POC, Adoption), which cut our internal admin by 63% and recovered 40+ hours a week.

Every Monday, your Head of Ops spends six hours pulling data from four platforms, copying it into a spreadsheet, formatting it into a report, and sending it to leadership. By the time it lands, half the numbers are already stale.

That report doesn't need a better template. It doesn't need another dashboard. It needs to not exist as a manual task at all.

AI agents can replace work like this entirely. Not assist with it. Not shave 20% off the time. Replace it. The organisations that figure this out first will operate at a fundamentally different speed. The rest will keep losing hours to processes that should have died years ago.

Most companies don't need an AI strategy. They need a process deletion strategy. AI agents are just the tool that makes deletion possible.

Why Previous Automation Hasn't Solved This

Most organisations have already taken a run at this. RPA, workflow tools, internal dashboards, Zapier integrations. These work well for structured, predictable tasks with clean inputs and rigid rules.

Most process and admin work doesn't fit that mould. It involves context, judgement, unstructured data, and cross-platform orchestration. A workflow tool can route a form submission. It can't read a meeting transcript, extract action items, create project tickets, and notify the right people.

AI agents fill that gap. They handle ambiguity, work across systems through API connections, and adapt without constant maintenance. They sit on top of your existing tools and data, connecting them in ways that weren't practical before.

That's the capability. But capability without readiness is just an expensive experiment.

The Catch: You Need to Be AI Native First

This is where most organisations fail, and it's the single biggest reason AI investments don't pay off.

MIT's GenAI Divide report found that 95% of enterprise AI pilots fail to deliver measurable impact. The root cause isn't model capability. It's what the report calls a "learning gap" between tools and organisations. Flawed enterprise integration. A lack of organisational readiness. Teams that can use AI but can't build with it.

We've seen this firsthand. A mid-market services company launched an AI pilot to automate client reporting. The demo was impressive. Leadership signed off. Three weeks into production, the agent started pulling from the wrong data sources, formatting outputs inconsistently, and missing edge cases nobody had scoped for. Within a month, the ops team had reverted to their spreadsheets. Trust in AI internally dropped to zero.

The pilot didn't fail because the technology was wrong. It failed because the team didn't understand the systems well enough to brief, test, or maintain what they'd built.

That's the pattern. And it repeats until the underlying problem is addressed.

The mistake is jumping straight to "AI Transformation" without first becoming "AI Native." These are not the same thing. AI Transformation is the outcome: agents handling real work, systems connected, processes replaced. AI Native is the prerequisite: your team having the foundational understanding to make that transformation stick.

Being AI Native means your teams can do four things:

Understand how systems connect. Not write code. Understand the plumbing. APIs, data flows, platform integrations. Well enough to brief someone who does write code.

Scope a problem for an agent. Break a manual process into a trigger, inputs, processing, and output. Articulate what the agent does, what it doesn't, and how you'll know if it's working.

Distinguish consumer AI from production AI. There is a significant gap between using ChatGPT to draft an email and building an agent that runs a workflow autonomously. AI Native teams understand that gap and know what sits on each side of it.

Evaluate outputs critically. Not blindly trust AI-generated results. Understand hallucination risks. Design guardrails that keep agents reliable.
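To make the guardrail idea concrete, here is a minimal sketch in Python. It assumes a hypothetical agent that returns action items as a JSON list of `owner` and `task` fields; the function name, the field names, and the `known_owners` check are illustrative assumptions, not part of any specific product. The point is that code, not trust, decides whether model output is allowed to reach another system.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionItem:
    owner: str
    task: str


def parse_action_items(raw_model_output: str, known_owners: set[str]) -> list[ActionItem]:
    """Validate model output before any downstream system acts on it.

    Raises ValueError rather than passing questionable data along.
    """
    try:
        payload = json.loads(raw_model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc

    if not isinstance(payload, list):
        raise ValueError("expected a JSON list of action items")

    items = []
    for entry in payload:
        if not isinstance(entry, dict):
            raise ValueError(f"expected an object, got: {entry!r}")
        owner = str(entry.get("owner", "")).strip()
        task = str(entry.get("task", "")).strip()
        if not task:
            raise ValueError(f"action item has no task: {entry!r}")
        # Reject owners the model may have invented (a typical hallucination).
        if owner not in known_owners:
            raise ValueError(f"unknown owner {owner!r}; route to human review")
        items.append(ActionItem(owner=owner, task=task))
    return items
```

A rejected output is not a failure of the agent. It is the guardrail doing its job: the item goes to a person instead of silently becoming a wrong ticket.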

Without this foundation, you get the same cycle: promising POC, broken production, eroded trust. Repeatedly.

The fix requires intentional investment. Structured training like our AI Agent Crash Courses. Hands-on workshops. Embedding experienced practitioners alongside your team. The goal is getting your people to a baseline where they can participate meaningfully in AI projects, not just observe them.

Once that foundation is in place, everything compounds. Each agent your team builds makes the next one easier. The patterns carry over. The skills carry over. The infrastructure carries over.

A Repeatable Framework for Building AI Agents

At CloudGeometry, we've used the following five-step framework to scale AI agents across our own operations. The numbers: 63% reduction in internal admin and process friction in Q1 2025. Over 40 hours recovered per week across the team. That time was redeployed into revenue-generating work. The cost of building these agents was a fraction of what we'd have spent on additional headcount or SaaS licences to manage the same workload.

Step 1: Build the Brief

Every agent starts with a detailed brief. Problem, scope, success criteria, and technical requirements. The key is specificity. A vague brief produces a vague agent.

Define the exact process being replaced. The trigger that starts the workflow. The data sources involved. The expected output. Use AI to help you build this. Feed your LLM the context of the problem and have it stress-test assumptions, identify gaps, and structure the requirements.

A strong brief covers: the problem statement and business impact, a clear user story, V1 scope with an explicit "no" list to prevent scope creep, acceptance criteria, and no more than three success metrics with hard baselines and targets.

The output is a single document. Source of truth for the entire build.
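One way to keep a brief honest is to treat it as structured data that can be checked. The sketch below is an assumption about how that might look, not a prescribed template: the class and field names are hypothetical, and the example values borrow the Monday report from earlier (the six-hour baseline comes from that scenario; the 0.5-hour target and the platform names are made up for illustration).

```python
from dataclasses import dataclass, field


@dataclass
class SuccessMetric:
    name: str
    baseline: float  # measured today
    target: float    # what V1 must hit


@dataclass
class AgentBrief:
    problem: str
    user_story: str
    trigger: str
    data_sources: list[str]
    expected_output: str
    out_of_scope: list[str]          # the explicit "no" list
    acceptance_criteria: list[str]
    metrics: list[SuccessMetric] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """Return what is still missing; an empty list means the brief is buildable."""
        found = []
        for name in ("problem", "user_story", "trigger", "expected_output"):
            if not getattr(self, name).strip():
                found.append(f"{name} is empty")
        for name in ("data_sources", "out_of_scope", "acceptance_criteria"):
            if not getattr(self, name):
                found.append(f"{name} has no entries")
        if not 1 <= len(self.metrics) <= 3:
            found.append("use between one and three success metrics")
        for metric in self.metrics:
            if metric.baseline == metric.target:
                found.append(f"metric {metric.name!r} has no gap between baseline and target")
        return found


brief = AgentBrief(
    problem="Weekly leadership report is assembled by hand from four platforms",
    user_story="As Head of Ops, I want the report built for me so I can review it, not compile it",
    trigger="Every Monday morning",
    data_sources=["platform_a", "platform_b", "platform_c", "platform_d"],
    expected_output="Formatted report sent to leadership",
    out_of_scope=["New dashboards", "Forecasting"],
    acceptance_criteria=["Figures match the source platforms"],
    metrics=[SuccessMetric("hours_per_week", baseline=6.0, target=0.5)],
)
print(brief.gaps())  # prints []
```

If `gaps()` returns anything, the brief is not ready for Step 2. An empty "no" list or a fourth metric is usually a sign that scope has not been decided yet.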

Step 2: Define Tooling and Requirements

With the brief locked in, assess the technical requirements. What APIs are needed? What platforms does the agent connect to? What does the user interaction look like?

This is where you make a critical shift: move away from consumer-facing AI tools for development. Browser-based AI chat is great for exploration. Building production agents requires CLI-based development tools like Claude Code, Gemini CLI, or OpenAI Codex. These tools can write and execute files, orchestrate scripts, and connect systems together, enabling real multi-step workflows.

The principle is simple. The deterministic parts of your system (commands, API connections, rules) should be governed by defined logic, not left to AI interpretation. AI handles the generation layer. Everything else runs on reliable, repeatable code.

Worth noting: most of what gets called an "AI agent" in practice is closer to a deterministic workflow with an AI generation layer than a fully autonomous system. That's not a limitation. It's a feature. We wrote about this distinction in detail in "You May Not Be Building an AI Agent, and That's OK". Understanding which architectural pattern fits your problem is one of the highest-leverage decisions you'll make.

Separating deterministic logic from the AI generation layer is the difference between a demo that impresses and a system that runs in production for months without breaking.
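Here is a rough sketch of that split, assuming a weekly report workflow. Every name in it is hypothetical, `fetch_metrics` stands in for real API calls, the sample figures are invented, and `fake_generate` is a placeholder for whichever model client you actually use. What matters is the shape: code fetches, formats, and validates; the model only writes the narrative.

```python
from typing import Callable

# The generation layer is injected, so the workflow never depends on one vendor.
Generate = Callable[[str], str]


def fetch_metrics() -> dict[str, float]:
    """Deterministic step: stand-in for API calls to the source platforms."""
    return {"new_leads": 42.0, "tickets_closed": 117.0}  # invented sample values


def format_metrics(metrics: dict[str, float]) -> str:
    """Deterministic step: numbers are rendered by code, never by the model."""
    return "\n".join(f"{name}: {value:g}" for name, value in sorted(metrics.items()))


def build_prompt(metrics: dict[str, float]) -> str:
    return "Summarise these weekly metrics in two sentences:\n" + format_metrics(metrics)


def render_report(metrics: dict[str, float], generate: Generate) -> str:
    summary = generate(build_prompt(metrics)).strip()
    # Deterministic guard: refuse to send a report with no narrative.
    if not summary:
        raise ValueError("generation layer returned an empty summary")
    return f"{summary}\n\n{format_metrics(metrics)}"


def fake_generate(prompt: str) -> str:
    """Placeholder for a real model call."""
    return "Leads and ticket throughput were steady this week."


print(render_report(fetch_metrics(), fake_generate))
```

Because the model is passed in as a plain function, the same workflow can be tested with a stub and run in production with a real client, and the figures in the report can never be altered by the generation step.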

Step 3: Plan and Sprint

Assemble your team. Brief the requirements. Plan your sprints. Align stakeholders. With Steps 1 and 2 done properly, this moves fast. A proof of concept should take two weeks. If it's taking longer, something upstream is missing.

The speed comes from front-loaded rigour: a tight brief and clear technical requirements before anyone starts building. That eliminates the back-and-forth, scope creep, and miscommunication that kill most AI projects.

Step 4: Achieve POC and Clear the Path to V1

The proof of concept validates that your agent works in a controlled environment. The jump to V1 is about removing barriers: edge cases, error handling, integration stability, user experience.

This is where most AI pilots die. Teams celebrate the POC and underestimate what production requires. Build in time for testing against real-world variability, not just the happy path. Define what "good enough for V1" looks like early. Resist adding features before your team has used the agent.

A POC might take one person two weeks. V1 might take a small team another two to four weeks. Compared to the cost of the manual process it replaces, the payback period is measured in weeks, not months.

Step 5: Drive Team Adoption

An agent nobody uses is a failed project. Regardless of how well it's built.

Adoption requires intentional rollout: training, documentation, feedback loops, visible sponsorship from leadership. Start with the team closest to the problem. Let them pressure-test the agent, surface issues, build confidence. Then expand.

Track adoption from day one. How often is the agent triggered? How much time is it saving? Are people reverting to manual processes? These signals tell you whether you have an adoption problem or a product problem. The fix is different for each.
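Those three questions can be answered from a simple event log. The sketch below assumes you record one event each time the task is done, tagged as handled by the agent or done manually; the `RunEvent` fields and the weekly grouping are illustrative assumptions, not a required schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunEvent:
    week: int
    handled_by: str            # "agent" or "manual"
    minutes_saved: float = 0.0


def adoption_summary(events: list[RunEvent]) -> dict[int, dict[str, float]]:
    """Per week: agent runs, manual runs, hours saved, and the agent's share of runs."""
    summary: dict[int, dict[str, float]] = {}
    for event in events:
        week = summary.setdefault(
            event.week, {"agent_runs": 0, "manual_runs": 0, "hours_saved": 0.0}
        )
        if event.handled_by == "agent":
            week["agent_runs"] += 1
            week["hours_saved"] += event.minutes_saved / 60
        else:
            week["manual_runs"] += 1
    for week in summary.values():
        total = week["agent_runs"] + week["manual_runs"]
        week["agent_share"] = week["agent_runs"] / total
    return summary
```

A falling `agent_share` with steady overall volume suggests people are reverting to manual work, which points to a product problem. Low volume from the start points to an adoption problem.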

What This Looks Like in Practice

Using this framework, our team has built and deployed agents that handle work previously spread across multiple people and platforms:

Personalised email generation. An agent embedded in Slack that pulls contact data from our CRM, builds context from company and industry fields, generates tailored outreach, and delivers it ready to send. What previously took 20-30 minutes per email now takes a single prompt and a quick review.

Meeting-transcript-to-Jira agent. Calls finish, action items appear as tickets, updates post to the relevant channels. Before this, action items were manually logged, if they were logged at all. Now nothing falls through the cracks.

Marketing collateral generation. One-pagers, checklists, whitepapers from a single prompt. Formatted to brand guidelines. Collateral that used to take a day to produce is done in minutes.

None of these required a large engineering team. All of them followed the same five steps.

The Shift Is Already Happening

The question isn't whether AI agents will replace process and admin work. It's whether your organisation builds the capability now or spends the next two years watching competitors move faster.

The framework is straightforward. The tools are available. The gap is execution. That starts with making your team AI Native and giving them a structured path to build on.

Getting there is possible internally. It's also slow, and most organisations underestimate how much the learning curve costs in failed pilots and lost momentum. If you want to compress that timeline, work with a team that's already done it. We've built the agents, made the mistakes, and refined the framework through production use, not theory.

If you want to see this in practice or explore what AI-first operations look like for your organisation, we're happy to walk you through it.